A Luddite's Lament

But really, AI isn't innocuous

Once again, I’m pushing AI out of the way as it tries to write my thoughts before I do. Call me a Luddite, but AI is a nosy little deity that constantly interrupts to save me from ignorance. Its comments arrive fast and demand immediate attention. When I read an essay online, AI keeps trying to explain it to me as I do my best to ignore the text popping up onscreen. How is it that the ‘smarter’ technology becomes, the harder I have to work for autonomy?

Yes, there are ways to mitigate AI’s presence. I press those buttons, but nothing gets rid of it entirely. It finds ways to return as if it just can’t fathom that I really meant to turn it off.

There are many places where AI makes sense: science, finance, medical research, to name a few. And I’m no purist. I use technologies that rely heavily on AI (Google, Substack, and Facebook), let my doctor take AI notes, and will turn on Grammarly. However, a thing being helpful in one place does not make it helpful in all. If I were Empress of the World, I’d banish it from the creative arts altogether, stop it from churning out books and music and pieces of art in the style of so and so, and send the fake videos back into the genie’s bottle. But we know that’s not going to happen.

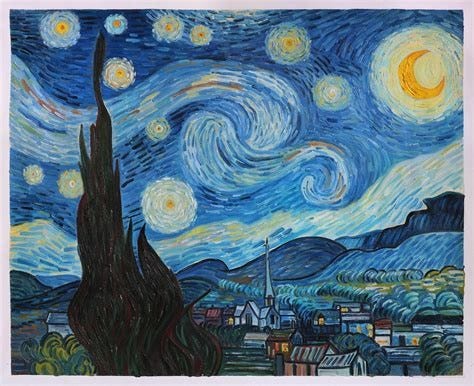

A lot of people love AI apps like ChatGPT. You may be one of them; they’re popular. But none of us can afford to forget the behind-the-scenes costs. These apps are information parasites. They steal the faces and voices of actors and the published work of writers, most often without permission or payment. And you may say, artists have been stealing from other artists for centuries. Painters painting Van Gogh’s The Starry Night and Degas’ ballerinas, writers writing stories in the style of Flannery O’Connor or Anton Chekhov.

But I say artists learning their craft by studying the masters (trying their hand at Van Gogh’s colors and brushstrokes, close-reading Chekhov’s stories to learn about narrative structure and efficient character development) is different. The student isn’t gobbling up Chekhov’s work so he can puke it up for someone else: “ChatGPT, write me a story in the style of Anton Chekhov.” No, he’s studying Chekhov because Chekhov has much to teach about writing good stories.

They say one of the biggest concerns with AI is that lonely people form emotional attachments to it, a risky, one-sided business. AI is notorious for giving users more of whatever they already believe. If you say blond people are mean, AI will offer supporting evidence. But how does that lead to greater insight, empathy, or learning? Isn’t this worth paying attention to, especially if it’s affecting kids and teens?

And we shouldn’t forget AI’s impact on the environment. A 100-word question to ChatGPT uses approximately one bottle of water on the backend. That doesn’t seem like much until you multiply it by the millions of questions asked every day. With the expansion of AI, companies are rushing to build data centers to support new uses. These facilities use huge amounts of water to cool their equipment. According to an article in EthicalGeo by Charlotte Jennings, the average 100-megawatt data center consumes about 2 million liters of water a day, equivalent to the water consumption of 6,500 American households. To make matters worse, as Jennings and others warn, data centers are often built in already water-stressed areas, putting surrounding communities and environments at risk.

Which brings me to AI guardrails, or should I say the lack of them. If you look up “AI guardrails” on Google, you’ll get dozens of articles about the need for rules. But with the horse already running the race, it’s a catch-up game at best. And in the meantime, Luddites like me are telling myriad little AI helpers to go away if we’re being polite, and cursing them out if we’re not. All I’m really saying here is that AI isn’t innocuous. It isn’t a party trick. We need to be careful.

Such despair. As a species, we dream up tools to misuse. Tools that can find new galaxies in a snap, and work infinities of chemical operations into targeted cures. I sit every night on a couch with an MD who would have given his eyeteeth for treatments his patients died not having. But instead we choose money, power, killing, inertia, addiction. Never mind AI, can we fix ourselves? History seems to say No, and so, like you, I advocate for guardrails and norms and laws and consequences. All the things we know how to do to protect ourselves from our worst impulses. There was a wonderful local feature in the San Francisco Chronicle today about the Centennial Light Bulb in Livermore. It's been glowing since 1901! Its inventor (French) came up with a better design. It dominated the market until the company was sold to General Electric in 1912 and they buried it. Lightbulbs that burn for centuries. Paintings rendered by human hands. Words conjured by human minds. It's so obvious that that's an in-itself. Not shortcuts but friction, challenge, creativity. https://www.sfchronicle.com/eastbay/article/centennial-lightbulb-fire-station-livermore-21329820.php

Loved this, Randall. This is why I have almost ditched audiobooks and actually physically read more now and, like you, turn off AI's unsolicited help. I don't know if we're Luddites per se, but we're writers, so imagination is our preferred language and analytical dynamics is the world we live in. Great piece, thanks for this.